Sending more data over the network is the most obvious effect: if you're returning 800 columns instead of 8 columns from every row, you could end up sending 100x more bytes over the network for every query execution (your mileage may vary depending on the individual column lengths, of course). More network bytes means more network packets sent and, depending on your RDBMS implementation, also more app-DB network roundtrips. Oracle can stream the result data of a single fetch call back to the client in multiple consecutive SQL*Net packets sent out in a burst, without needing the client application to acknowledge every preceding packet first. The throughput of such bursts depends on the TCP send buffer size and, of course, on the network link bandwidth and latency.

169926130 bytes sent via SQL*Net to client

187267 bytes received via SQL*Net from client

Read more about the SQL*Net more data to client wait event.
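To make the byte-count argument concrete, here is a minimal sketch that compares the payload of `SELECT *` with an explicit select-list. It rests on several assumptions: it uses Python's built-in `sqlite3` module with an in-memory database as a stand-in for a real Oracle client, the table `wide_t` and its column names are hypothetical, and the "bytes" figure is just the summed length of the returned values, not actual SQL*Net traffic.

```python
import sqlite3

# Hypothetical table: 8 narrow columns the app needs plus 92 padding columns.
NEEDED = [f"c{i}" for i in range(8)]
PADDING = [f"pad{i}" for i in range(92)]
ALL_COLS = NEEDED + PADDING

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE wide_t (" + ", ".join(f"{c} TEXT" for c in ALL_COLS) + ")"
)

# Every column holds a 10-character string so the arithmetic is easy to follow.
row = ["x" * 10] * len(ALL_COLS)
placeholders = ", ".join("?" for _ in ALL_COLS)
conn.executemany(f"INSERT INTO wide_t VALUES ({placeholders})", [row] * 1000)


def payload_bytes(sql):
    """Sum the lengths of all returned values as a crude proxy for bytes sent."""
    return sum(len(value) for r in conn.execute(sql) for value in r)


star_bytes = payload_bytes("SELECT * FROM wide_t")
narrow_bytes = payload_bytes("SELECT " + ", ".join(NEEDED) + " FROM wide_t")

print(star_bytes, narrow_bytes, star_bytes / narrow_bytes)
```

With 100 equally sized columns versus 8, the sketch reports a 12.5x difference in payload. On a real system the ratio depends on the actual column lengths and on protocol overhead, so treat the number as an illustration of the proportionality rather than a measurement.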

I'll focus only on the SQL performance aspects in this article. I'm using examples based on Oracle, but most of this reasoning applies to other modern relational databases too.

- Some query plan optimizations are not possible.
- Hard parsing/optimization takes more time.
- Cached cursors take more memory in the shared pool.

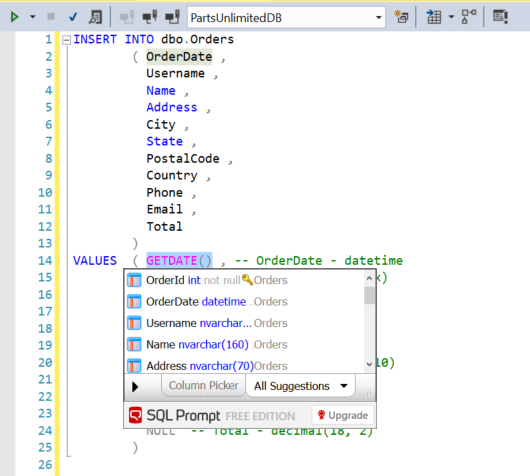


Reasons why SELECT * is bad for SQL performance

Here's a list of reasons why SELECT * is bad for SQL performance, assuming that your application doesn't actually need all the columns. When I write production code, I explicitly specify the columns of interest in the select-list (projection), not only for performance reasons, but also for application reliability reasons. For example, will your application's data processing code suddenly break when a new column has been added or the column order has changed in a table?
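The reliability concern about column order can be sketched as follows. This is an illustration under assumptions, not Oracle-specific code: it uses Python's built-in `sqlite3` module, and the tables `customers_v1` and `customers_v2` are hypothetical versions of the same table whose columns are declared in a different order.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Two hypothetical versions of one table; v2 declares the columns in a new order.
conn.execute("CREATE TABLE customers_v1 (id INTEGER, name TEXT, email TEXT)")
conn.execute("CREATE TABLE customers_v2 (id INTEGER, email TEXT, name TEXT)")
for table in ("customers_v1", "customers_v2"):
    conn.execute(
        f"INSERT INTO {table} (id, name, email) "
        "VALUES (1, 'Ann', 'ann@example.com')"
    )


def name_via_star(table):
    # Fragile: assumes the name is always the second column of the table.
    return conn.execute(f"SELECT * FROM {table}").fetchone()[1]


def name_via_explicit(table):
    # Stable: the select-list fixes both the columns and their order.
    return conn.execute(f"SELECT id, name FROM {table}").fetchone()[1]


star_v1 = name_via_star("customers_v1")
star_v2 = name_via_star("customers_v2")
explicit_v1 = name_via_explicit("customers_v1")
explicit_v2 = name_via_explicit("customers_v2")

print(star_v1, star_v2)          # positional access silently changes meaning
print(explicit_v1, explicit_v2)  # explicit projection returns the name both times
```

The positional read through `SELECT *` returns the email address instead of the name once the column order changes, without raising any error, while the explicit select-list keeps returning the name.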